In the first two episodes of this blog series, we saw how we put in place an efficient formal testbench for a cache, how we found a genuine bug, reproduced a deadlock bug and found a design fix. At this point we were confident that no other deadlock bug exists. This episode shows how we verified powerful assertions, and how we prepared the formal testbench to verify end-to-end properties (an end-to-end property checks the DUT outputs against what has been seen on its inputs), along with new best practices.

An essential property

Actually, let's start with a property that is not end-to-end but that is essential for a cache. This property is the only one we will have to check internal details. It verifies that, upon a hit request in the cache, the hit is in only one way. If that is not respected, there is a strong ambiguity about which data to read or write.

hit_onehot: assert property(i_ucache.i_hit_stage.is_hit |->

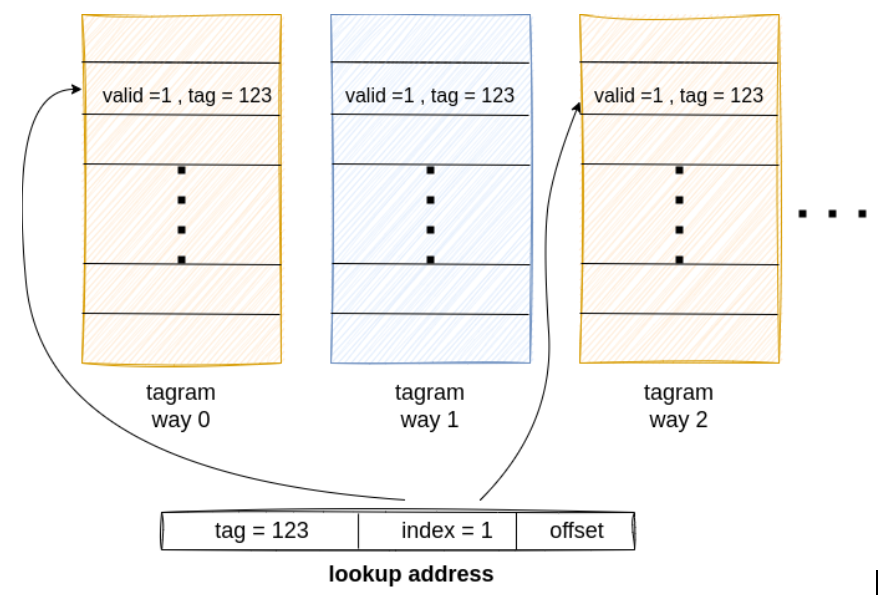

$onehot(i_ucache.i_hit_stage.hit_way);When a lookup is performed in the cache, the address is split into a tag (123 in the following figure), an index (1), and an offset. The index is used to address tagram ways. If the content of this index is valid and the tag is the same in two ways (0 and 2 below), this is a "multiple hit", and a serious problem. Many potential design bugs can be seen as a violation of this property.

However, several constraints are needed to discard false failures on this assertion. As explained in the first episode, we abstracted the different RAM arrays, including the tagram. It means the cache reading in the tagram gets "random" results. This is not good at all and makes the assertion quickly fail.

Introducing the CAM component

This is where the Content Addressable Memory (CAM) comes into play. A CAM is a reduced view of a memory. Instead of being able to hold thousands of cells, one for each address, it holds only a handful of selected addresses, however these are left unconstrained. This is actually some kind of a fixed-length associative array, where the length is much smaller than the size of the real array.

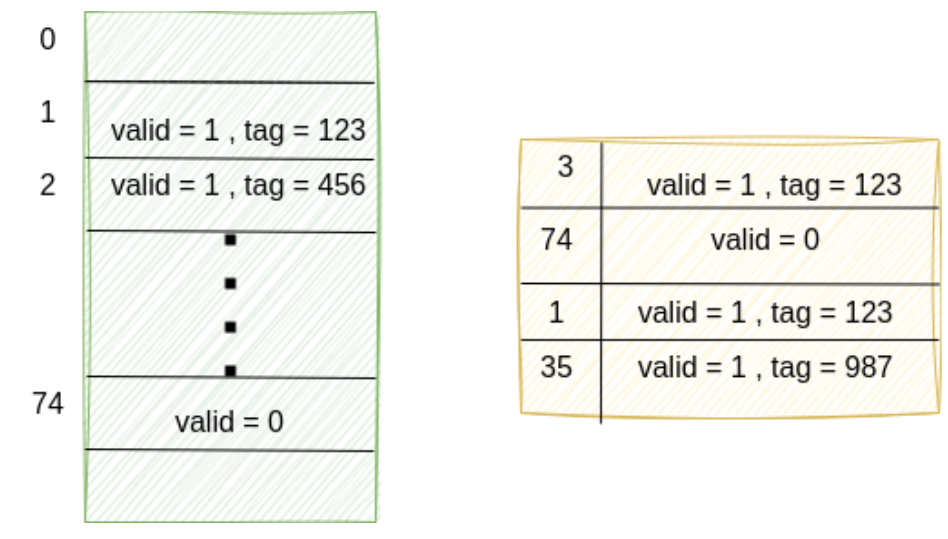

The following figure shows a real tagram on the left and an abstraction of it as a CAM on the right.

You can see that the real tagram contains a valid tag at index 2. But this is absent in the CAM. To avoid false failures because the cache reads at index 2, we simply constrain all reads (and writes) to be at indexes which are present in the CAM:

request_in_cam: assume property(request |-> there_exists_one_in_cam(req_index));This is a strong overconstrain that we mitigate by correctly sizing the CAM. To do so, we define cover properties which identify how many different indexes are used. The proof of these allows to determine what CAM size is too large to be fully used because the formal analysis becomes too complex. We usually size the CAMs with the smallest size that FV is not capable of fully using.

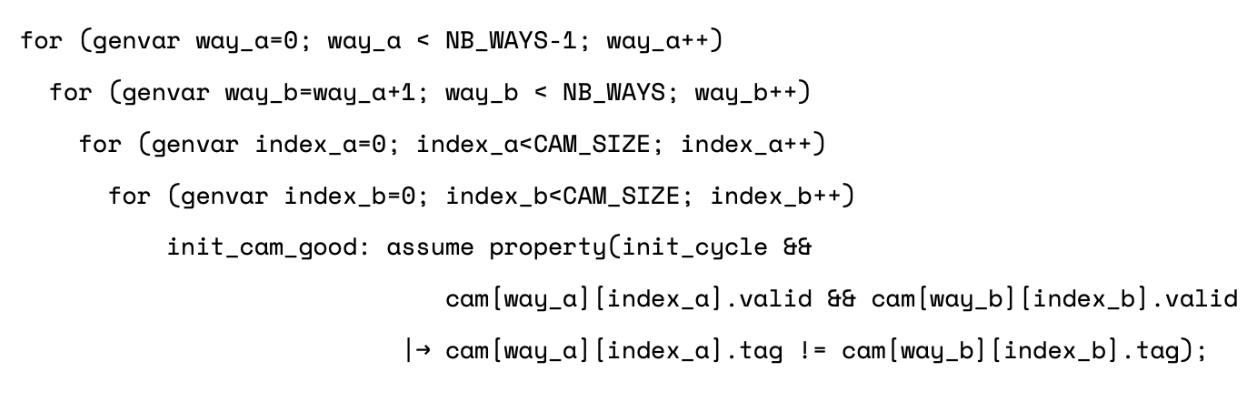

We use one array of CAM instances to represent all tagram arrays. Additionally, using a CAM allows to abstract the initial content of the cache. We just have to let the values in the array be unconstrained. Actually, not fully unconstrained! The hit_onehot assertion will still fail with a simple scenario: the read request comes in, and it hits in two ways because the initial state of each CAM allows two ways to have the same valid tag at the same index. We need to add constraints which apply only at the first cycle out of reset:

This adds a lot of complexity to the formal analysis, so use these constraints only when required.

Unfortunately we were still not done with the hit_onehot assertion. New constraints using the content of the CAM were needed:

- The cache must not get "non-cacheable" requests for addresses already present in the CAM.

- We also needed to model the dirtyram arrays with CAMs, and make the content of the tag and dirty CAMs coherent (i.e. a dirty line must be valid).

- The addresses in the CAM must be outside of the memory mapped register range.

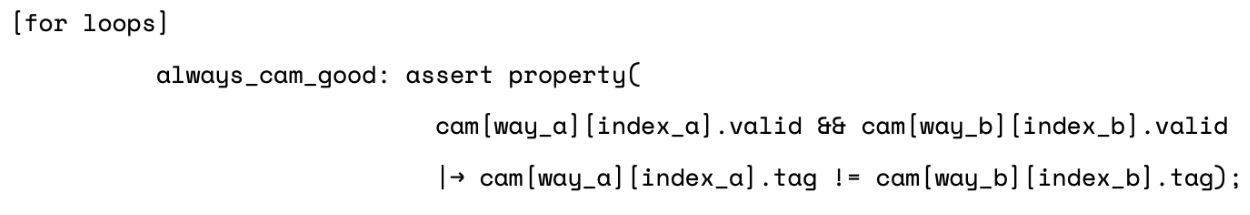

Some of these constraints are used to make the initial content of the CAM correct. We can also use very similar properties as assertions to check any cycle. This is done by simply removing the "init_cycle" term:

Be afraid of the dead-end states!

As mentioned, we need to constrain the requests reaching the tagram (actually the CAM) so that they target only indexes present in the CAM. This has a hidden drawback.

To illustrate it, let me first summarize what is "deep bug hunting" in FV.

Deep bug hunting

There are different approaches and all are "semi-formal", which means they are not exhaustive. Instead, they are excellent at finding failures.

The main technique uses trace ends to start the formal analysis in addition to starting it from the reset state. The first traces are generated for user-defined cover properties, or for automatically generated cover properties. Then, from the last cycle (or the few last cycles) of this trace, another formal analysis is executed, finding other traces, which are used to start other formal analysis, etc. And they may also find bugs. This approach is able to reach bugs which need lots of cycles to appear, and which are not accessible with standard (exhaustive) FV.

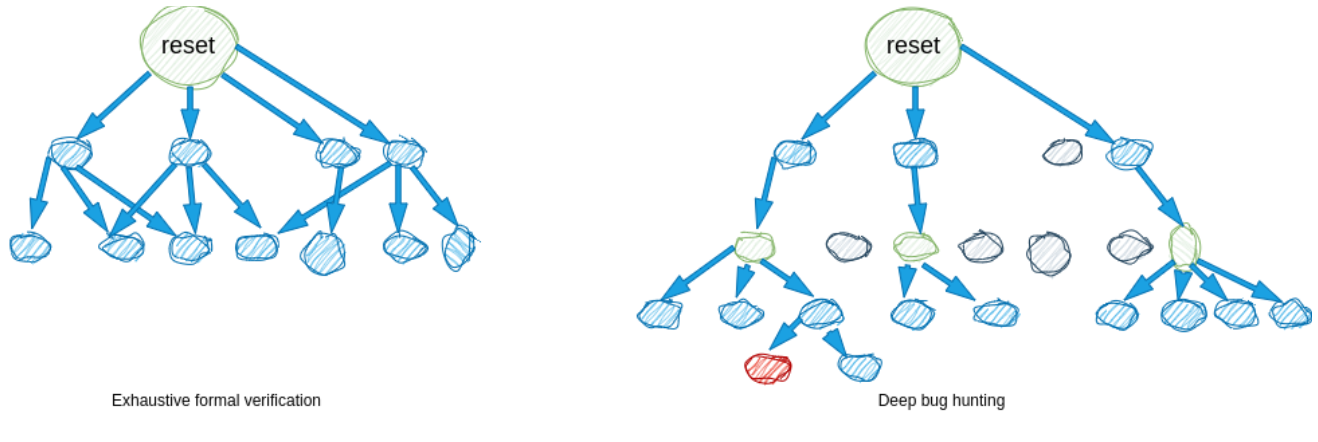

This is illustrated below with the exhaustive FV starting exploring from the reset state only, and reaching its limits after having explored all states up to a few cycles. Instead, deep bug hunting starts exploring from the reset but also from some other states (in green), and can go deeper in the design, possibly hitting buggy states (in red), but also missing some states (in grey).

With deep bug hunting, when a new formal analysis is started from the end of a trace, the trace prefix is frozen. What we call a "dead-end state" is one from which no following state can be reached because some constraints apply afterward. And the more dead-end states you have, the less efficient your deep bug hunting will be.

How you can get rid of dead-end states

Suppose the cache receives a request for an address A. Maybe this request will be buffered for several cycles. Then, this request triggers an access to the CAM, at index I which depends on A. The constraint request_in_cam applies and makes many dead-end states because I is not present in the CAM. A request targeting A should have been avoided several cycles earlier.

The dead-end states problem can be greatly mitigated by following this simple rule: constrain things as soon as possible. In this example, it consists in constraining the addresses on the input of the cache so that, for cacheable requests, only the ones with an address matching in the CAM are issued.

Adding CAMs for tagram and dirtyram, and the associated constraints, is not easy. It must be iterative, adding abstractions and constraints only step-by-step when debugging failures of an important assertion such as the hit_onehot one. See it as an investment, however. You will see that we used it for the rest of the cache verification.In the end, we did not get any failure for hit_onehot, but also no proof even after a very long time. This is not surprising because this assertion really verifies almost the full control of the design (as seen when analyzing the coverage... in the next episode). However, manually adding some nasty bugs, all could be quickly seen as a failure on this assertion, which is a good sign.

Takeaways

Oh, what a dense episode! Let’s recap.

We’ve seen how we greatly enhanced (or complexified!) our formal testbench. This was required to verify an essential control assertion, and to later be able to verify data integrity assertions. Here are the best practices we have identified.

In the next episode, I will show how this formal testbench can be used with very minor additions to verify the main functionality of the cache, with end-to-end properties. So, see you next week!